🪬 Large Language Model Arms Race Gets Spicy As Amazon Invests $4bn in Anthropic [Claude 2]

"Data Was Born Free, Yet Everywhere it is in Chains" [inspired by Jean-Jacques Rousseau]

Can a simple framework change your life?

Doubtful. But it can change the way you think. Which can change the way you act.

Here's a simple way to understand the potential value capture in the AI space.

In the News: Amazon Invests $4bn in Anthropic’s LLM

Why it Matters:

Amazon is pouring up to $4B into Anthropic, an AI startup known for its chatbot Claude 2, escalating the cloud and AI arms race among big tech companies.

The Details:

Initial Investment: Amazon's first chunk of funding will be $1.25B, with an option to invest an additional $2.75B.

Stake: Amazon acquires a minority stake in Anthropic.

Strategic Collaboration: Amazon will integrate Anthropic's AI into its suite of products. This gives Amazon cloud customers and engineers early, specialized access to Anthropic's tech, such as model customization.

Tech Exchange: Anthropic will leverage Amazon's custom Trainium and Inferentia chips for building, training, and deploying its AI foundation models.

Financial Boost: Anthropic gets a hefty financial cushion for the expensive process of training and operating big AI models.

Cloud Provider: Amazon Web Services (AWS) will be Anthropic's main cloud provider.

The Big Picture:

Competitive Landscape: Google previously invested $300M in Anthropic and was named the startup's preferred cloud provider. Microsoft has invested a whopping $10B in Anthropic rival OpenAI.

Market Implications: The move intensifies competition in the AI and cloud markets, and hints at Amazon's broader ambitions in AI technology.

Contrarian View: With Amazon's financial might and reach, it could help Anthropic make rapid advancements in AI tech, potentially eclipsing rivals. However, the partnership raises questions about AI monopolies and how they could stifle innovation.

Next Steps: Look out for developments in product integrations and any shifts in cloud and AI market share.

Source: The FT, Inside Newsletter

My Parameter is Bigger Than Yours!

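Reported or estimated parameter counts for some prominent LLMs as of September 26, 2023 (most vendors do not publish official figures):

GPT-4 (OpenAI) - roughly 1.7 trillion parameters (unconfirmed estimate; OpenAI has not disclosed the figure)

PaLM 2 (Google) - not officially disclosed; 540 billion is the figure for the original PaLM

Claude v1 (Anthropic) - 137 billion parameters (unconfirmed; Anthropic has not disclosed the figure)

LLaMA (Meta) - 65 billion parameters (largest variant)

Orca (Microsoft) - 13 billion parameters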

So Why Does Parameter Size Matter?

In machine learning, a Large Language Model (LLM) like GPT-3 or GPT-4 is a complex algorithm trained to understand and generate human-like text.

It has billions, or by some estimates trillions, of "parameters," which are like adjustable settings in the model.

These parameters are adjusted during the training process so that the model as a whole captures the intricacies of language, context, and semantics.

Think of them as the stats you'd level up in a video game character, with one caveat: no single parameter maps to a specific skill. Capability comes from billions of them working together.

All else being equal, more parameters tend to make a model better at understanding and generating text that's coherent and contextually relevant, although the training data and training method matter as well.
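To make "parameters" concrete, here is a minimal Python sketch that counts them for a toy fully connected network and estimates the memory needed just to store the weights. The layer sizes are made up for illustration, and the 2 bytes per parameter assumes 16-bit weights; real LLMs use transformer layers, so treat this as a simplified picture rather than how any of the models above is built.

```python
def dense_layer_params(n_inputs: int, n_outputs: int) -> int:
    """One weight per input-output pair, plus one bias per output."""
    return n_inputs * n_outputs + n_outputs


def count_params(layer_sizes: list[int]) -> int:
    """Total parameters in a stack of fully connected layers."""
    pairs = zip(layer_sizes, layer_sizes[1:])
    return sum(dense_layer_params(a, b) for a, b in pairs)


def weights_gigabytes(n_params: int, bytes_per_param: int = 2) -> float:
    """Storage for the weights alone, in decimal gigabytes (assumes 16-bit weights by default)."""
    return n_params * bytes_per_param / 1e9


# Toy network (hypothetical sizes): 8 inputs -> 16 hidden units -> 4 outputs
toy_total = count_params([8, 16, 4])
print(toy_total)  # 212

# A 65-billion-parameter model at 2 bytes per parameter
print(weights_gigabytes(65_000_000_000))  # 130.0
```

Even this rough arithmetic shows why training and serving large models is expensive: a 65-billion-parameter model needs about 130 GB just to hold its weights at 16-bit precision, before any compute is done.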

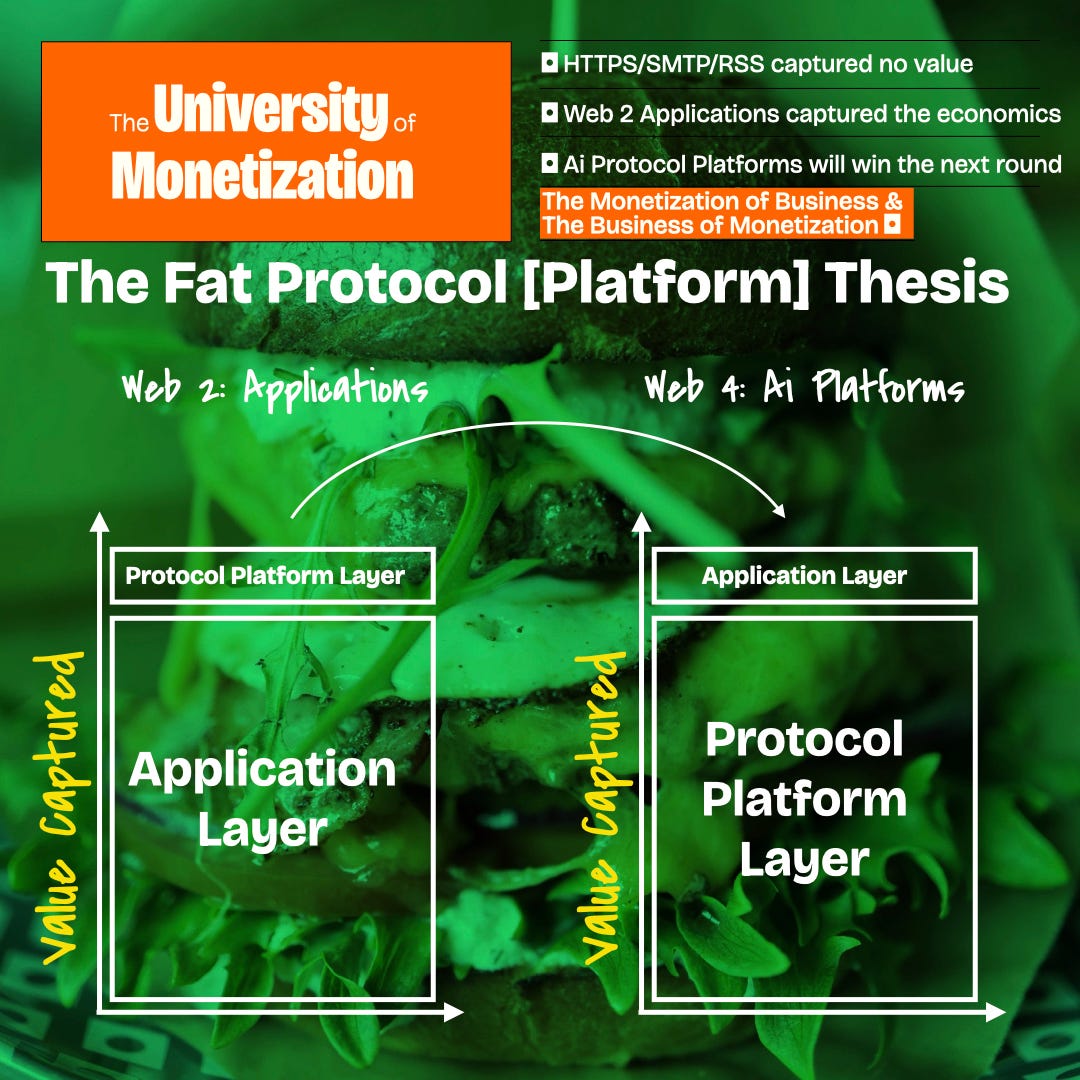

So What?: The Fat Protocol Thesis of Value Capture

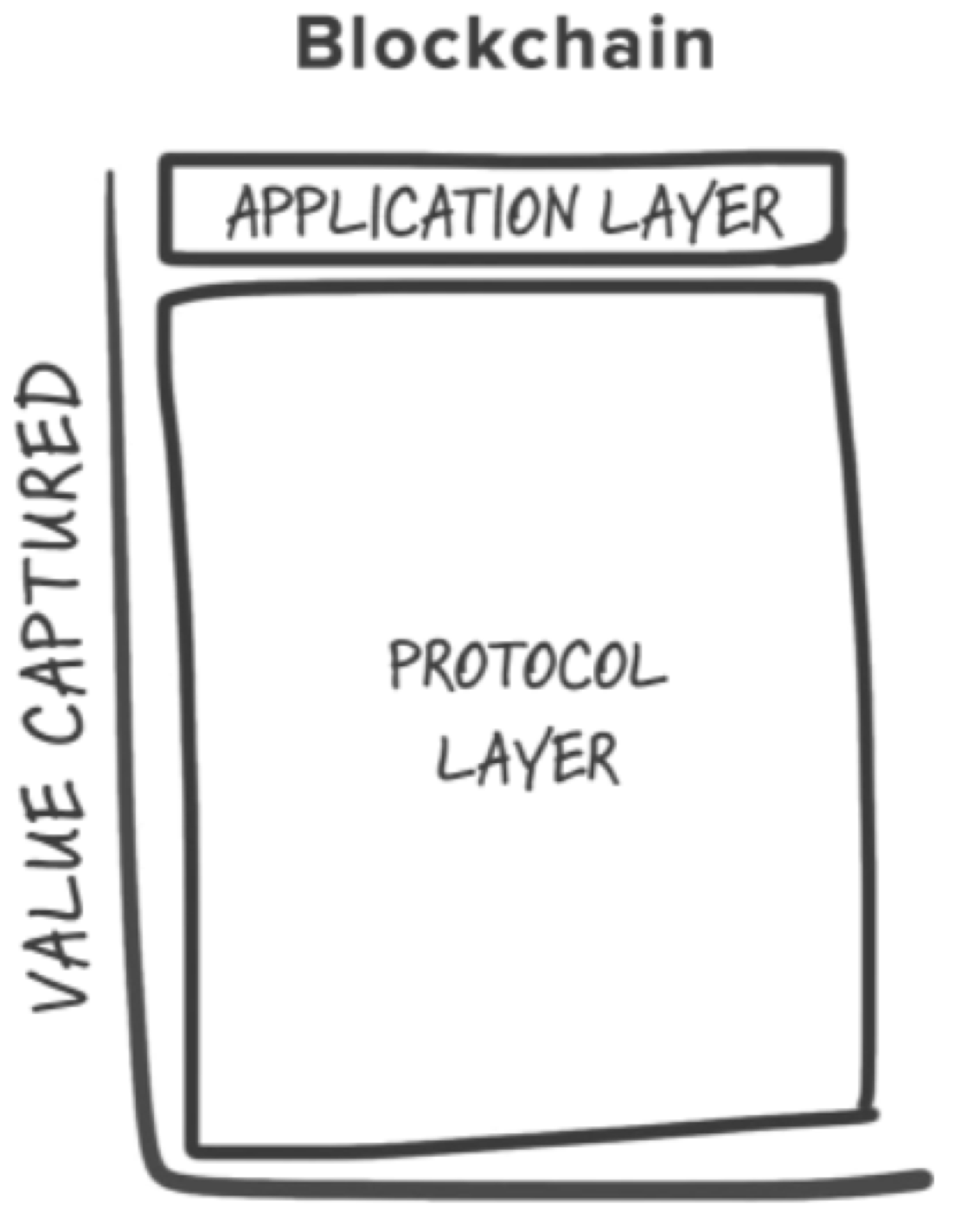

Joel Monegro’s Fat Protocol Thesis was first used to explain the value accrual that we’d expect to occur in Blockchain protocols.

But I find it perfect for analysing the market structure now taking shape in AI.

The Core Idea: The "Fat Protocol" thesis argues that in the blockchain world, most of the value will be captured at the protocol layer rather than the application layer.

This is a reversal from the Internet era, where companies like Google, Facebook, and Amazon captured the lion's share of value at the application layer.

Credits: Joel Monegro

Protocol vs Application Layer:

Protocol Layer: This is the foundational technology upon which applications are built. In the blockchain context, think of Bitcoin or Ethereum as protocols.

Application Layer: These are the end-user products built on top of protocols. For the Internet, think Gmail, Facebook, etc. In blockchain, these could be decentralized finance apps or NFT platforms.

Applied to AI: Value Capture

Internet: Protocols like HTTP, TCP/IP, etc., enabled the web but captured little economic value. The applications (Facebook, Google, etc.) captured the vast majority of the value.

AI: The platforms themselves will capture most of the value [as was the case with blockchains].

This is because they are the base upon which applications will be built and grow.

This will lead to sticky customers and network-effect-style growth if the applications become successful.

Credits: Joel Monegro

Takeaway:

Value capture at the pure application layer will be much harder than in the Web 2.0 era, as the powerful platform stack of cloud provider + large language model will seek to collect most of the economics.

And that’s not even considering the huge pricing power of NVIDIA.

In reality, most applications will struggle to achieve economics much different from those of a marketing or ad agency, i.e. 20-30% net margins.
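A back-of-the-envelope sketch of that squeeze, in Python. The cost shares below are hypothetical placeholders chosen purely for illustration, not figures from any company or from this newsletter; only the 20-30% net margin band comes from the takeaway above.

```python
def app_net_margin_pct(llm_pct: int, cloud_pct: int, other_costs_pct: int) -> int:
    """Net margin left to an application, in percentage points of its revenue."""
    return 100 - llm_pct - cloud_pct - other_costs_pct


# Hypothetical split of each $100 of application revenue (illustrative only):
# $30 to the LLM provider, $15 to the cloud provider, $30 to staff, sales, etc.
example_margin = app_net_margin_pct(llm_pct=30, cloud_pct=15, other_costs_pct=30)
print(example_margin)  # 25

# Does the result land in the agency-style 20-30% band?
in_agency_band = 20 <= example_margin <= 30
print(in_agency_band)  # True
```

Change the platform shares and the application's margin moves one-for-one, which is the point: whoever sets the price of the model and the cloud largely sets the application's economics.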

P.S. If you have any great frameworks you’ve created or seen that you want featured in this newsletter, please shoot them over.

Bye.

Only Frameworks